Disable “Thinking,” Still Get Thousands of Tokens: What Instruct LLMs Are Doing

Token caps, not labels, explain many benchmark gaps.

Today, LLMs are commonly released in two flavors:

Instruct models: optimized to follow user instructions and produce a direct, user-facing answer. They may use some internal computation, but they are generally marketed and expected to respond without long intermediate “workings,” keeping latency and token usage low.

Reasoning / Thinking models: optimized to solve harder problems by spending more test-time compute, often by generating long intermediate traces (plans, self-checks, scratch work) before producing a final answer. They typically perform better on difficult tasks, but at a much higher inference cost.

Some providers also ship a single model with a “Thinking” mode that can be toggled on/off (for example, early Qwen3 releases, Qwen3.5, and Nemotron 3 Nano).

In principle, the Thinking variants should outperform Instruct variants on challenging benchmarks that require multi-step reasoning, while also being much more expensive to run, often generating 10x to 20x more tokens.

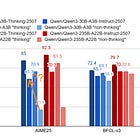

But in late 2025, something odd started to happen: models labeled “Instruct” began performing significantly better on very difficult reasoning-heavy tasks like AIME (math) and GPQA Diamond (science MCQ). A concrete example we’ve already discussed is Qwen3. The first version, with Thinking “off,” performed moderately on AIME, yet the later Qwen3 4B Instruct 2507 unexpectedly jumped by nearly 30 accuracy points.

What changed?

In practice, Qwen3 Instruct “thinks” anyway when it sees AIME-like prompts. It produces thousands of tokens (self-questioning, hesitations, partial attempts) before finally giving an answer. And this isn’t unique to Qwen: several recent “Instruct” models I’ve evaluated do the same. They generate an “inner” monologue that is hard to suppress, often useless to the user, and sometimes actively misleading.

This has two important consequences:

Cost: “Instruct” models that are supposed to be cheap can become expensive in the wild, simply because they generate far more tokens than expected.

Measurement: It blurs what we actually mean by “non-thinking” performance. If an Instruct model quietly uses a large hidden reasoning budget, then benchmark results no longer reflect the model’s capabilities without test-time scaling.

That’s the main motivation for this article, which sets out to answer a simple question:

If a model is truly not allowed to think, how well do LLMs perform in 2026 on complex tasks?

My second motivation is cost optimization. If an “Instruct” model has a hidden reasoning budget, identifying that budget becomes critical for controlling inference spend. Suppose a model typically succeeds after ~10k tokens of “thinking” (already huge for an Instruct model). In that case, you might cap the maximum generation length at, say, 15k tokens: if it hasn’t converged by then, it’s probably not going to, and you can stop early instead of letting it run indefinitely and burn compute. (This is a strong assumption, but it can be extremely cost-effective in production.)
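As a rough illustration of the capping idea above, here is a minimal Python sketch that derives a generation cap from the token counts of past successful answers. Everything in it is an assumption made for illustration: the function name `suggest_token_cap`, the 95th-percentile definition of "typically succeeds," and the 1.5x safety margin (chosen only because it maps ~10k tokens to a 15k cap, as in the example).

```python
import math


def suggest_token_cap(success_token_counts, quantile=0.95, margin=1.5):
    """Suggest a max generation length from past successful generations.

    success_token_counts: tokens generated for answers that were correct.
    quantile: share of successes the "typical" length should cover
              (illustrative assumption, not a measured value).
    margin: multiplicative headroom on top of that length
            (illustrative assumption).
    """
    if not success_token_counts:
        raise ValueError("need at least one successful generation")
    ordered = sorted(success_token_counts)
    # Nearest-rank quantile: smallest length covering `quantile` of successes.
    rank = max(1, math.ceil(quantile * len(ordered)))
    typical = ordered[rank - 1]
    return int(typical * margin)


# Hypothetical token counts for five correct answers.
observed = [8000, 9000, 10000, 9500, 7000]
cap = suggest_token_cap(observed)
print(cap)
```

The resulting value would then be passed as the maximum generation length of whatever inference stack you use (the parameter is often called `max_tokens`, but check your own framework). The trade-off is the one stated above: any problem that needs more tokens than the cap is counted as lost.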

To study this, I created a new evaluation benchmark, AIME-Instruct, which simply reuses the AIME evaluation sets but introduces a new prompt and rules designed to better isolate non-thinking behavior.

I then compared several recent “Instruct” models (Qwen3 Instruct 2507 (4B), Ministral 3 Instruct, RNJ-1, OLMo 3 Instruct, and others) under multiple “thinking budgets,” including an uncapped budget that approximates today’s default behavior.

To separate genuine progress from test-time scaling, I also included older reference points like Llama 3.1 8B, Gemma 3, and early Qwen3 versions with Thinking explicitly turned off.

Key finding: Many “Instruct” models rely on a non-trivial implicit reasoning budget to solve AIME-level questions, and that budget varies widely across models. As a result, comparing accuracy alone on these benchmarks is increasingly misleading unless we also report how many tokens the model had to generate to reach that accuracy. In other words, “Instruct” models with similar parameter counts can differ widely in inference cost.
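To make "accuracy plus tokens" concrete, here is a small Python sketch of how the two numbers can be reported together for several budgets. It is not the AIME-Instruct scoring code. The `Result` record and the function names are hypothetical, and it assumes that an answer needing more tokens than the budget counts as a failure, which only approximates a real cap when decoding is deterministic.

```python
from dataclasses import dataclass


@dataclass
class Result:
    correct: bool  # was the final answer right with no cap?
    tokens: int    # tokens generated to reach that answer


def accuracy_at_budget(results, budget=None):
    """Share of problems solved within `budget` tokens (None = uncapped)."""
    if not results:
        raise ValueError("results must not be empty")
    solved = sum(
        1 for r in results
        if r.correct and (budget is None or r.tokens <= budget)
    )
    return solved / len(results)


def mean_tokens(results, budget=None):
    """Average tokens spent per problem, truncated at `budget` if given."""
    if not results:
        raise ValueError("results must not be empty")
    spent = [r.tokens if budget is None else min(r.tokens, budget)
             for r in results]
    return sum(spent) / len(spent)


def report(results, budgets):
    """One row per budget: accuracy and the average cost paid for it."""
    return [
        {"budget": b,
         "accuracy": accuracy_at_budget(results, b),
         "mean_tokens": mean_tokens(results, b)}
        for b in budgets
    ]


# Hypothetical per-problem results for one model.
runs = [Result(True, 500), Result(True, 12000),
        Result(False, 30000), Result(True, 3000)]
for row in report(runs, [4000, 16000, None]):
    print(row)
```

Reading the rows side by side shows how much of a model's uncapped accuracy survives at each budget, and how many tokens that accuracy costs on average.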

In this article, I first describe how I built AIME-Instruct, then analyze the results for all the models I tested. I conclude with recommendations for reducing inference costs without leaving accuracy on the table.